MediaPipe Iris: Detecting Key Points in the Eye

This is an introduction to "MediaPipe Iris", a machine learning model that can be used with ailia SDK. You can easily use this model, along with many other ready-to-use ailia MODELS, to create AI applications with ailia SDK.

Overview

MediaPipe Iris, released by Google in August 2020, is a machine learning model for detecting keypoints in a person's eye. Typical applications include recognizing where a person is looking, estimating the distance to the camera from the apparent size of the eye, and detecting whether a person is asleep.

Source: https://ai.googleblog.com/2020/08/mediapipe-iris-real-time-iris-tracking.html
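The distance estimation mentioned above can be sketched with a simple pinhole camera model. The Google post linked above reports that the horizontal iris diameter is roughly 11.7 mm for most people, so once the iris width in pixels is known, similar triangles give the distance. The function name, the focal length, and the example values below are illustrative assumptions, not part of MediaPipe Iris or ailia SDK.

```python
# Approximate horizontal iris diameter reported in the Google AI blog post.
IRIS_DIAMETER_MM = 11.7


def estimate_distance_mm(focal_length_px: float, iris_diameter_px: float) -> float:
    """Pinhole-camera estimate of the camera-to-eye distance in millimetres."""
    if iris_diameter_px <= 0:
        raise ValueError("iris_diameter_px must be positive")
    # Similar triangles: real_size / distance == pixel_size / focal_length
    return focal_length_px * IRIS_DIAMETER_MM / iris_diameter_px


if __name__ == "__main__":
    # Hypothetical camera: 1000 px focal length, iris spanning 20 px in the image.
    print(estimate_distance_mm(1000.0, 20.0))
```

The focal length in pixels has to come from camera calibration or image metadata. Without it, only relative changes in distance can be estimated.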

Architecture

MediaPipe Iris consists of the following three models.

- BlazeFace for detecting the position of a face

- FaceMesh for detecting keypoints of the face (see details here)

- MediaPipe Iris for detecting eye keypoints

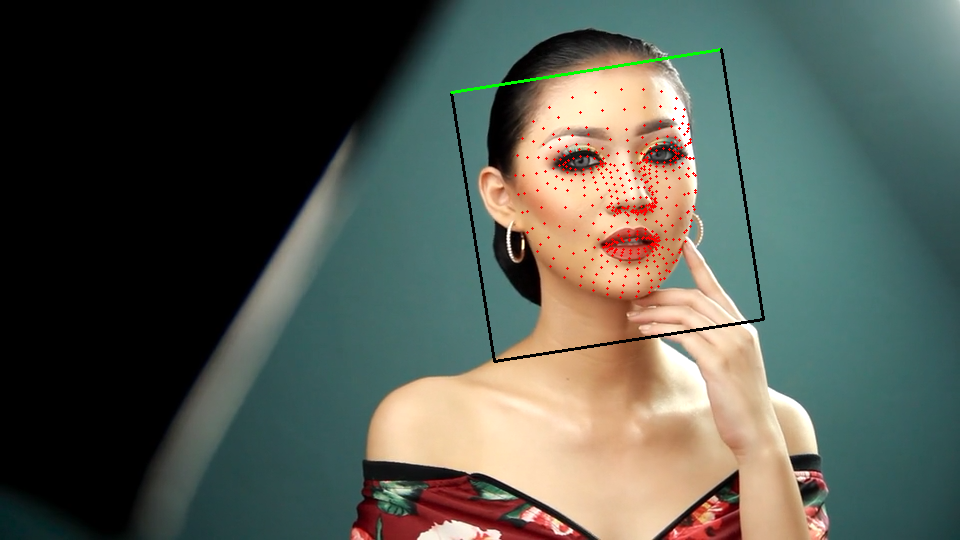

First, BlazeFace detects the position of the face in the input image. FaceMesh then computes 468 keypoints on the detected face, and finally the positions of the eyes are computed from those keypoints.
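The last step, going from face keypoints to an eye region, can be sketched as follows. This is a minimal illustration and not the code used in ailia MODELS: the landmark indices for the eye corners and the margin factor `scale` are assumptions that should be checked against the FaceMesh model actually in use, and `eye_crop_box` is a hypothetical helper.

```python
import numpy as np

# Assumed FaceMesh indices for the two corners of each eye (illustrative only).
EYE_CORNERS_A = (33, 133)
EYE_CORNERS_B = (362, 263)


def eye_crop_box(landmarks, corner_ids, scale=2.0):
    """Return a square (x0, y0, x1, y1) box centred between two eye corners.

    landmarks: array of shape (468, 2) with (x, y) in image pixels.
    scale: box side length as a multiple of the corner-to-corner distance.
    """
    p0 = landmarks[corner_ids[0]]
    p1 = landmarks[corner_ids[1]]
    center = (p0 + p1) / 2.0
    half = np.linalg.norm(p1 - p0) * scale / 2.0
    x0, y0 = center - half
    x1, y1 = center + half
    return float(x0), float(y0), float(x1), float(y1)


if __name__ == "__main__":
    # Synthetic landmarks: only the two corners of one eye are filled in.
    pts = np.zeros((468, 2), dtype=np.float64)
    pts[33] = (100.0, 200.0)
    pts[133] = (140.0, 200.0)
    print(eye_crop_box(pts, EYE_CORNERS_A))
```

The resulting box would then be cropped from the frame and resized to the input size of the eye model.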

Input image (Source: https://pixabay.com/ja/videos/%E5%A5%B3%E6%80%A7-%E3%83%A4%E3%83%B3%E3%82%B0-%E8%B1%AA%E8%8F%AF%E3%81%A7%E3%81%99-%E8%A1%A8%E7%8F%BE-32387/)

Face position and keypoints

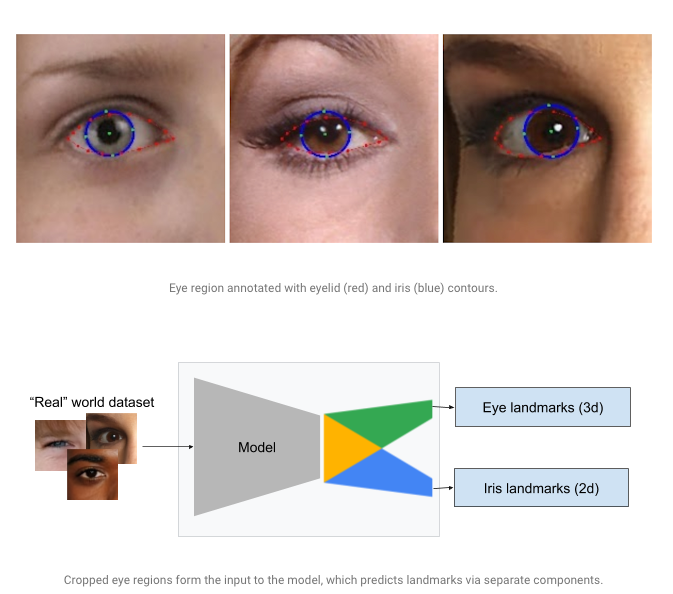

From the cropped eye image, MediaPipe Iris detects 71 eye keypoints and 5 pupil keypoints.
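A minimal sketch of how such outputs could be post-processed is shown below. It assumes that the model returns two flat arrays of (x, y, z) triples, 71 for the eye and 5 for the pupil, expressed in pixels of the 64x64 model input. That layout, and the helper names, are assumptions for illustration and should be verified against the actual model outputs.

```python
import numpy as np

NUM_EYE_POINTS = 71
NUM_PUPIL_POINTS = 5


def split_iris_output(eye_raw, pupil_raw):
    """Reshape flat model outputs into (71, 3) and (5, 3) arrays (assumed layout)."""
    eye = np.asarray(eye_raw, dtype=np.float32).reshape(NUM_EYE_POINTS, 3)
    pupil = np.asarray(pupil_raw, dtype=np.float32).reshape(NUM_PUPIL_POINTS, 3)
    return eye, pupil


def to_image_coords(points, crop_box, input_size=64):
    """Map (x, y) from model-input pixels back to pixels of the original image."""
    x0, y0, x1, y1 = crop_box
    scale = np.array([(x1 - x0) / input_size, (y1 - y0) / input_size])
    return points[:, :2] * scale + np.array([x0, y0])


if __name__ == "__main__":
    # Dummy outputs standing in for a real inference result.
    eye, pupil = split_iris_output(np.zeros(71 * 3), np.full(5 * 3, 32.0))
    print(to_image_coords(pupil, (80.0, 160.0, 160.0, 240.0)))
```

Here `crop_box` is the eye region, in original image pixels, that was cropped and resized before inference.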

Keypoints of the pupil

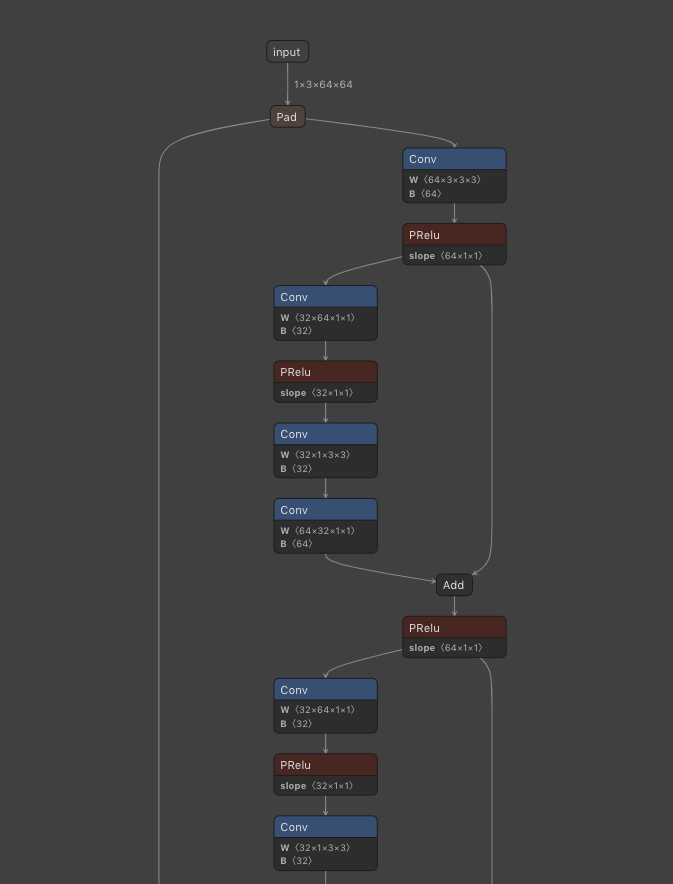

The model architecture of MediaPipe Iris is based on MobileNet and combines Convolution, DepthwiseConvolution, and PReLU layers. The input image size is 64x64.
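To make these building blocks concrete, here is a small NumPy sketch of a depthwise 3x3 convolution followed by PReLU on a 64x64 feature map. It only illustrates the operations themselves. The channel count, weights, and slope values are arbitrary assumptions and do not reproduce the actual MediaPipe Iris network.

```python
import numpy as np


def prelu(x, alpha):
    """PReLU: identity for positive inputs, learned slope alpha for negative ones."""
    return np.where(x > 0, x, alpha * x)


def depthwise_conv3x3(x, kernels):
    """Depthwise 3x3 convolution with zero padding (cross-correlation, as in DL frameworks).

    x: float array of shape (C, H, W); kernels: array of shape (C, 3, 3).
    Each channel is filtered with its own kernel, and channels are never mixed.
    """
    _, h, w = x.shape
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))
    out = np.zeros_like(x)
    for dy in range(3):
        for dx in range(3):
            out += kernels[:, dy, dx, None, None] * padded[:, dy:dy + h, dx:dx + w]
    return out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((8, 64, 64)).astype(np.float32)
    k = rng.standard_normal((8, 3, 3)).astype(np.float32)
    alpha = np.full((8, 1, 1), 0.25, dtype=np.float32)  # one slope per channel
    y = prelu(depthwise_conv3x3(x, k), alpha)
    print(y.shape)
```

A depthwise layer with C channels needs only 9·C weights, compared with 9·C² for a standard 3x3 convolution with C input and C output channels, which is one reason MobileNet-style models are small and fast.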

Netron visualization of MediaPipe Iris

Usage

You can use the following command to run the model on a webcam video stream.

$ python3 mediapipe_iris.py --video 0
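In the command above, the value `0` presumably selects the first camera device. The sketch below shows one way a script could interpret such an argument, treating a digit string as a camera index and anything else as a video file path. It is a hypothetical illustration and not the actual code of `mediapipe_iris.py`. The helper names are made up for this example.

```python
import argparse


def parse_video_source(value: str):
    """Return an int camera index for digit strings, otherwise the path unchanged."""
    return int(value) if value.isdigit() else value


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical parser with a single --video option.
    parser = argparse.ArgumentParser(description="Sketch of --video handling")
    parser.add_argument(
        "--video",
        type=parse_video_source,
        default=None,
        help="camera index (e.g. 0) or path to a video file",
    )
    return parser


if __name__ == "__main__":
    args = build_parser().parse_args(["--video", "0"])
    print(type(args.video).__name__, args.video)
```

The parsed value could then be handed to a capture API such as OpenCV's `cv2.VideoCapture`, which accepts either a device index or a file name.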

ailia Inc. has developed ailia SDK, which enables cross-platform, GPU-based rapid inference.

ailia Inc. provides a wide range of services from consulting and model creation, to the development of AI-based applications and SDKs. Feel free to contact us for any inquiry.

ailia Tech BLOG
