FuguMT: Machine Learning Model for English-to-Japanese Translation

This is an introduction to "FuguMT", a machine learning model that can be used with ailia SDK. You can easily use this model, along with the many other ready-to-use models in ailia MODELS, to build AI applications with ailia SDK.

Overview

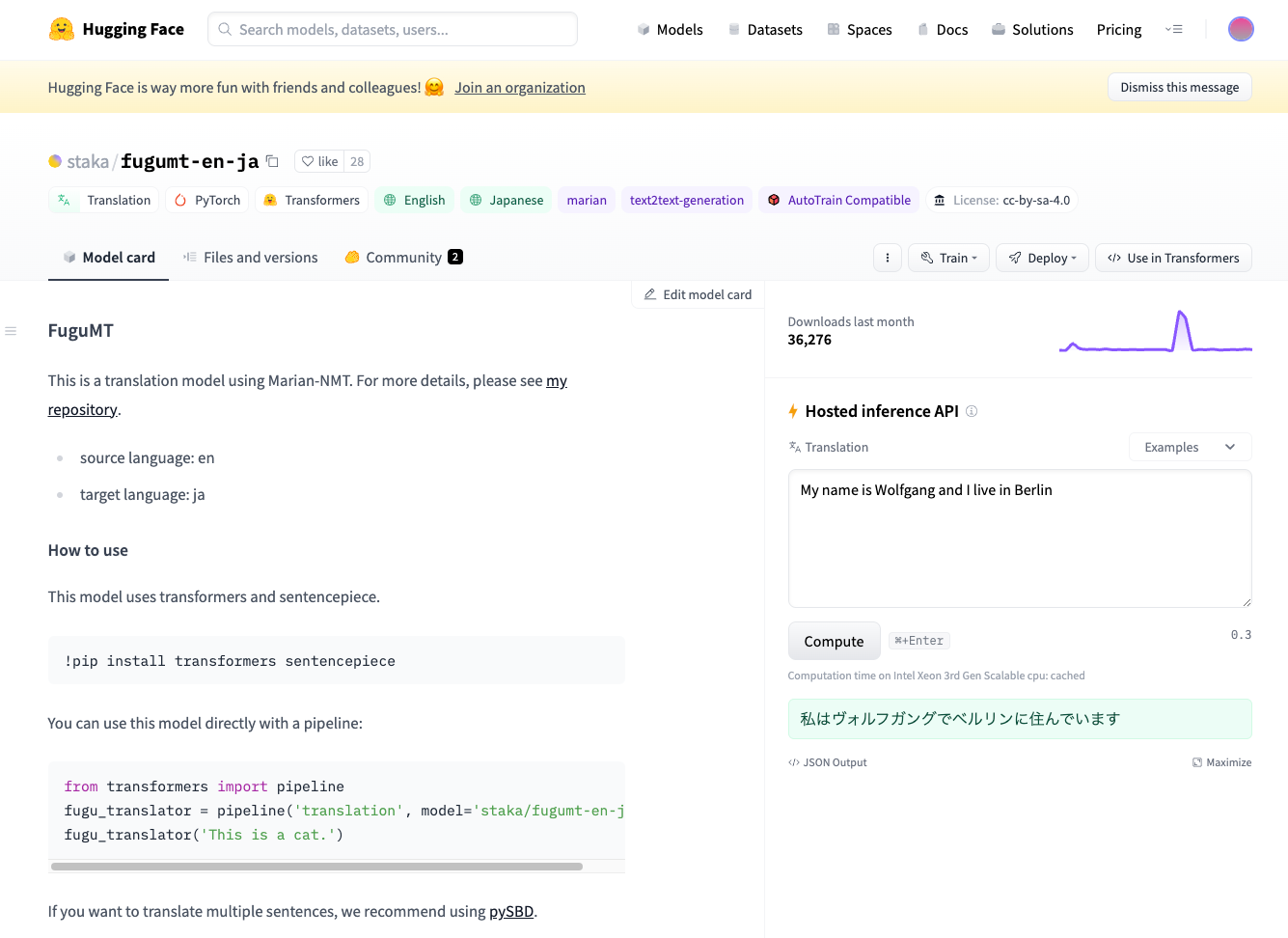

FuguMT is a Japanese translation model based on MarianMT, a machine translation framework developed by Microsoft. FuguMT translates English text into Japanese and is released under the CC-BY-SA-4.0 license.

FuguMT on GitHub: s-taka/fugumt (github.com)

Dataset

The FuguMT training data is described in the blog below (Japanese only). The dataset contains about 6.6 million bilingual pairs (Japanese: 690 MB, English: 610 MB, about 100 million words), and training took about 30 hours using Marian-NMT and SentencePiece on an AWS p3.2xlarge instance.

FuguMT's BLEU (BiLingual Evaluation Understudy) score is 31.65, higher than those of GPT-3.5 (27.04) and GPT-4 (29.66).

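ぷるーふおぶこんせぷと (Proof of Concept): Quantitatively measuring GPT-4's translation performance using text (Japanese/English) from the Ministry of Foreign Affairs website… staka.jp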
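BLEU scores a candidate translation by its clipped n-gram overlap with a reference, multiplied by a penalty for candidates that are too short. The following is a minimal corpus-level BLEU in plain Python. It makes several assumptions: the input is already tokenized (Japanese text must first be segmented into words), there is one reference per sentence, the 1- to 4-gram precisions are weighted equally, and no smoothing is applied. It is not the scorer or tokenization used in the evaluation above, so its numbers are not directly comparable to 31.65.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses, references, max_n=4):
    """Corpus-level BLEU on a 0-100 scale, one reference per hypothesis.

    Assumes pre-tokenized inputs, uniform n-gram weights and no smoothing.
    """
    matches = [0] * max_n  # clipped n-gram matches per order
    totals = [0] * max_n   # hypothesis n-gram counts per order
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        hyp_len += len(hyp)
        ref_len += len(ref)
        for n in range(1, max_n + 1):
            hyp_counts = ngrams(hyp, n)
            ref_counts = ngrams(ref, n)
            # Clip each hypothesis n-gram count by its count in the reference.
            matches[n - 1] += sum(min(c, ref_counts[g]) for g, c in hyp_counts.items())
            totals[n - 1] += sum(hyp_counts.values())
    if min(matches) == 0:
        return 0.0  # a zero precision makes unsmoothed BLEU zero
    # Geometric mean of the modified n-gram precisions.
    log_precision = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: penalize output shorter than the references.
    bp = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100.0 * bp * math.exp(log_precision)
```

For example, a hypothesis identical to its reference scores 100, and dropping the last of five reference tokens keeps every precision at 1 but applies a brevity penalty of exp(1 - 5/4). In practice, BLEU for Japanese depends heavily on how the text is segmented, which is one reason published scores should only be compared under the same setup.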

Architecture

FuguMT is a Transformer-based sequence-to-sequence (Seq2Seq) model. The output is generated one token at a time by running the decoder repeatedly, with each step's result fed back as input to the next step.
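The token-by-token loop can be illustrated with greedy decoding. In this sketch, `decoder_step` is a hypothetical stand-in for one run of the FuguMT decoder. It returns fixed scores that reproduce the output tokens from the C++ sample run below, so the example runs on its own. The start ID 32000 and the vocabulary size 32001 come from this article. Treating ID 0 as the end-of-sequence token is an assumption, based on the trailing 0 in the sample's token lists. Note that FuguMT itself uses beam search rather than greedy selection.

```python
import numpy as np

PAD_ID = 32000      # initial decoder input (pad token)
EOS_ID = 0          # assumed end-of-sequence ID (trailing 0 in the sample log)
VOCAB_SIZE = 32001  # length of the logits vector


def decoder_step(prev_tokens):
    """Hypothetical decoder stand-in: scores 517, 6044, 68, then EOS highest.

    A real implementation would run the FuguMT decoder with input_ids,
    attention_mask, decoder_input_ids and past_key_values.
    """
    script = [517, 6044, 68, EOS_ID]
    logits = np.zeros(VOCAB_SIZE, dtype=np.float32)
    logits[script[min(len(prev_tokens) - 1, len(script) - 1)]] = 1.0
    return logits


def greedy_decode(max_len=32):
    tokens = [PAD_ID]  # decoding starts from the pad token
    for _ in range(max_len):
        next_id = int(np.argmax(decoder_step(tokens)))  # best-scoring token
        tokens.append(next_id)
        if next_id == EOS_ID:
            break
    return tokens[1:]  # drop the start token


print(greedy_decode())  # [517, 6044, 68, 0]
```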

The decoder takes four inputs: input_ids, attention_mask, decoder_input_ids, and past_key_values[25]. input_ids is the input token sequence. attention_mask is a vector of 1s with the same length as input_ids. decoder_input_ids holds the token IDs selected in the previous iteration; at the first step, it is initialized to the pad token (ID 32000). past_key_values holds the decoder's cached internal states, each of shape (beam_size, 8, 0, 64) at the start. The third dimension, initially 0, grows with each inference step.
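To make the shape bookkeeping concrete, the NumPy sketch below assembles the decoder inputs for each step and shows the third dimension of a cached tensor growing by one per step. Several details are assumptions for illustration only: the int64 dtypes, the batch shape of input_ids, the helper name `make_step_inputs`, and the use of zeros to simulate the decoder's new cache slice. Only one of the past_key_values tensors is tracked.

```python
import numpy as np

BEAM_SIZE = 12  # default beam_size in the Python version
NUM_HEADS = 8   # second dimension of each past_key_values tensor
HEAD_DIM = 64   # last dimension of each past_key_values tensor
PAD_ID = 32000  # decoder_input_ids value at the first step


def make_step_inputs(input_ids, decoder_input_ids, past):
    """Assemble one step's decoder inputs (dtypes and batch shapes are assumptions)."""
    input_ids = np.asarray(input_ids, dtype=np.int64)
    return {
        "input_ids": input_ids,
        "attention_mask": np.ones_like(input_ids),  # all ones, same shape as input_ids
        "decoder_input_ids": np.asarray(decoder_input_ids, dtype=np.int64),
        "past_key_values": past,
    }


src = [[183, 30, 15, 11126, 4, 0]]  # input tokens from the C++ sample run
dec = np.full((BEAM_SIZE, 1), PAD_ID, dtype=np.int64)
past = np.zeros((BEAM_SIZE, NUM_HEADS, 0, HEAD_DIM), dtype=np.float32)  # empty cache

for step in range(3):
    inputs = make_step_inputs(src, dec, past)
    # Stand-in for the decoder: each step appends one slice along axis 2.
    # (A real loop would also replace dec with the newly selected tokens.)
    new_slice = np.zeros((BEAM_SIZE, NUM_HEADS, 1, HEAD_DIM), dtype=np.float32)
    past = np.concatenate([inputs["past_key_values"], new_slice], axis=2)
    print(step, past.shape)  # (12, 8, 1, 64), then (12, 8, 2, 64), then (12, 8, 3, 64)
```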

The decoder outputs logits and past_key_values[25]. The logits form a 32001-dimensional vector with one score per vocabulary token. Applying softmax to these scores gives each token's probability. The output text is then selected by beam search over the logits. In the Python version, the default beam_size is 12.
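Because logits are unnormalized scores, beam search usually converts them to log-probabilities before ranking candidates. The NumPy sketch below shows one selection step. It adds each beam's cumulative log-probability to the log-softmax of that beam's logits, then keeps the best `beam_size` (beam, token) pairs across all beams. This is a simplified illustration, not the ailia MODELS implementation. It leaves out length penalties, end-of-sequence handling, and reordering past_key_values by the returned beam indices. The names `beam_step` and `log_softmax` are hypothetical helpers.

```python
import numpy as np


def log_softmax(x):
    """Numerically stable log-softmax over the last axis."""
    shifted = x - x.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def beam_step(beam_scores, logits, beam_size):
    """One beam-search step.

    beam_scores: (num_beams,) cumulative log-probabilities so far
    logits:      (num_beams, vocab_size) raw decoder scores
    Returns (new_scores, source_beam_indices, token_ids), each of length beam_size.
    """
    vocab_size = logits.shape[-1]
    candidates = beam_scores[:, None] + log_softmax(logits)  # (num_beams, vocab)
    flat = candidates.ravel()
    top = np.argsort(flat)[::-1][:beam_size]  # best candidates first
    return flat[top], top // vocab_size, top % vocab_size


scores = np.zeros(2)
logits = np.array([[2.0, 1.0, 0.0],
                   [0.0, 3.0, 0.0]])
new_scores, beams, tokens = beam_step(scores, logits, beam_size=2)
print(beams, tokens)  # [1 0] [1 0]
```

At the first step, all beams are identical. Implementations therefore typically start with a single live beam (for example, a score of 0 for beam 0 and a very large negative score for the rest) so the search does not pick duplicate hypotheses.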

Tokenization uses MarianTokenizer, which is based on SentencePiece. An English source model encodes the input, and a Japanese target model decodes the output.
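The token IDs in the C++ sample run suggest how special tokens wrap the SentencePiece pieces. Both the input and output token lists end in 0, and decoding starts from the pad ID 32000. The helpers below assume that 0 is the end-of-sequence ID; this is an inference from the sample log, not something documented here. They append that ID to the encoder input and strip special IDs from the decoder output before detokenization. Both `to_encoder_inputs` and `strip_special` are hypothetical utilities. The SentencePiece encoding and decoding themselves are done by MarianTokenizer or ailia Tokenizer and are not reproduced here.

```python
EOS_ID = 0      # assumed: every token list in the sample log ends with 0
PAD_ID = 32000  # pad / decoder start token ID


def to_encoder_inputs(piece_ids):
    """Append EOS and build the all-ones attention mask."""
    input_ids = list(piece_ids) + [EOS_ID]
    attention_mask = [1] * len(input_ids)
    return input_ids, attention_mask


def strip_special(output_ids):
    """Remove the pad start token and truncate at the first EOS."""
    ids = [t for t in output_ids if t != PAD_ID]
    return ids[:ids.index(EOS_ID)] if EOS_ID in ids else ids


ids, mask = to_encoder_inputs([183, 30, 15, 11126, 4])
print(ids)                                       # [183, 30, 15, 11126, 4, 0]
print(strip_special([32000, 517, 6044, 68, 0]))  # [517, 6044, 68]
```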

Usage (Python)

To use FuguMT from ailia SDK in Python, run the following command:

$ python3 fugumt-en-ja.py --input "This is a cat."

The output translation is:

translation_text: これは猫です。

Usage (C++)

A sample that uses FuguMT in C++ with ailia Tokenizer is also available.

The build process and a sample run are shown below.

cd fugumt

export AILIA_LIBRARY_PATH=../ailia/library

export AILIA_TOKENIZER_PATH=../ailia_tokenizer/library

cmake .

make

./fugumt.sh

env_id : 0 type : 0 name : CPU

env_id : 1 type : 1 name : CPU-AppleAccelerate

env_id : 2 type : 2 name : MPSDNN-Apple M1 Max (Warning : FP16 backend is not worked this model)

you can select environment using -e option

selected env name : CPU-AppleAccelerate

Input : This is a cat.

Input Tokens :

183 30 15 11126 4 0

Output : これは猫です

Output Tokens :

517 6044 68 0

Program finished successfully.

ailia Inc. has developed ailia SDK, which enables cross-platform, GPU-based rapid inference.

ailia Inc. provides a wide range of services from consulting and model creation, to the development of AI-based applications and SDKs. Feel free to contact us for any inquiry.

ailia Tech BLOG
