DeepSpeech2: A machine learning model for speech recognition

This is an introduction to DeepSpeech2, a machine learning model that can be used with ailia SDK. You can easily use this model, along with many other ready-to-use ailia MODELS, to create AI applications with ailia SDK.

Overview

DeepSpeech2 is an end-to-end speech recognition model proposed in December 2015. It takes speech audio as input and outputs English text.

Architecture

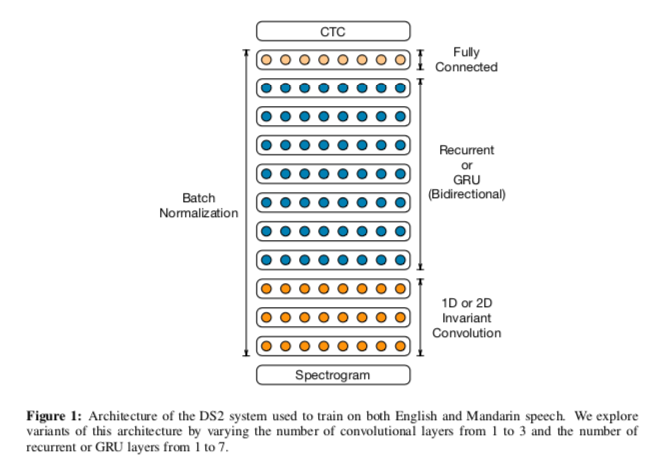

DeepSpeech2 converts the input speech into mel spectrograms, applies CNN and RNN layers, and finally outputs text using Connectionist Temporal Classification (CTC).

Source: https://arxiv.org/abs/1512.02595
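As a rough illustration of the first stage only, the sketch below computes a log-magnitude spectrogram with NumPy. It is not the preprocessing shipped with the model: the function name `log_spectrogram`, the 20 ms window, and the 10 ms stride are assumptions chosen for the example, and the projection onto the mel scale is left out.

```python
import numpy as np

def log_spectrogram(signal, sample_rate=16000, window_ms=20, stride_ms=10):
    """Return a (frames, frequency_bins) log-magnitude spectrogram.

    Window and stride lengths are illustrative assumptions, not the
    values used by the DeepSpeech2 model itself.
    """
    win = int(sample_rate * window_ms / 1000)   # samples per frame
    hop = int(sample_rate * stride_ms / 1000)   # samples between frame starts
    n_frames = 1 + (len(signal) - win) // hop
    window = np.hanning(win)                    # taper to reduce spectral leakage
    frames = np.stack(
        [signal[i * hop : i * hop + win] * window for i in range(n_frames)]
    )
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log1p(magnitude)                  # compress the dynamic range

# One second of a 1 kHz tone sampled at 16 kHz.
t = np.arange(16000) / 16000
tone = np.sin(2 * np.pi * 1000 * t)
spec = log_spectrogram(tone)
print(spec.shape)  # (frames, frequency_bins)
```

Each row of the result describes one short slice of time, which is the kind of time-by-frequency input that the CNN and RNN layers then process.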

Connectionist Temporal Classification (CTC) is a method often used in character recognition and speech recognition, in combination with RNNs such as LSTMs. In these tasks, the width of a single character and the duration of a single phoneme vary. CTC handles this by letting the network emit one label per time step, including a special blank label, and then having the decoder merge consecutive repeats of the same character and remove the blanks.
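The decoder-side rule can be sketched in a few lines of Python. This is a minimal greedy (best path) decoder written for illustration, not the code used in the ailia sample: the names `ctc_collapse` and `ctc_greedy_decode` and the use of `_` as the blank label are assumptions. CTC relies on the blank label so that genuine double letters, such as the "ll" in "hello", survive the merging of repeats.

```python
from itertools import groupby

BLANK = "_"  # assumed blank symbol for this example

def ctc_collapse(path, blank=BLANK):
    """Merge consecutive repeated labels, then drop blank labels."""
    merged = [label for label, _ in groupby(path)]
    return "".join(label for label in merged if label != blank)

def ctc_greedy_decode(frame_probs, alphabet, blank=BLANK):
    """Take the most probable label in each frame, then collapse the path."""
    best_path = [
        alphabet[max(range(len(probs)), key=probs.__getitem__)]
        for probs in frame_probs
    ]
    return ctc_collapse(best_path, blank)

# A blank between the two "l" runs keeps them as separate characters.
print(ctc_collapse("hh_e_ll_lll_oo"))  # hello

alphabet = ["_", "a", "b"]
frame_probs = [
    [0.1, 0.8, 0.1],    # a
    [0.2, 0.7, 0.1],    # a (repeat, merged with the previous frame)
    [0.9, 0.05, 0.05],  # blank
    [0.1, 0.6, 0.3],    # a (new character because a blank came before it)
    [0.1, 0.2, 0.7],    # b
]
print(ctc_greedy_decode(frame_probs, alphabet))  # aab
```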

Usage of language models

Correcting the CTC output with a language model makes the text more natural. The ctcdecode library is used for CTC decoding with a language model.

The language model itself can be downloaded from the link below.

In CTC decoding with a language model, the probability of a word is calculated as the sum over all possible frame-level patterns that produce it. Dynamic programming is used to make this calculation efficient.
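The summation can be illustrated with the standard CTC forward recursion. The sketch below is an assumption-labeled example rather than the decoder library's internals: the function names are hypothetical, label index 0 is assumed to be the blank, and language model scoring is omitted. A brute-force version that enumerates every frame-level pattern is included only to show that the dynamic programming result matches.

```python
from itertools import product

def collapse(path, blank=0):
    """Merge consecutive repeats and drop blanks (CTC decoding rule)."""
    out, prev = [], None
    for label in path:
        if label != prev and label != blank:
            out.append(label)
        prev = label
    return tuple(out)

def ctc_sequence_probability(probs, target, blank=0):
    """Sum the probability of all patterns that collapse to `target` (DP)."""
    ext = [blank]                      # target interleaved with blanks
    for label in target:
        ext += [label, blank]
    size = len(ext)
    alpha = [0.0] * size
    alpha[0] = probs[0][blank]
    if size > 1:
        alpha[1] = probs[0][ext[1]]
    for t in range(1, len(probs)):
        new = [0.0] * size
        for s in range(size):
            total = alpha[s]                       # stay on the same symbol
            if s >= 1:
                total += alpha[s - 1]              # advance by one position
            if s >= 2 and ext[s] != blank and ext[s] != ext[s - 2]:
                total += alpha[s - 2]              # skip the blank in between
            new[s] = total * probs[t][ext[s]]
        alpha = new
    return alpha[-1] + (alpha[-2] if size > 1 else 0.0)

def brute_force_probability(probs, target, blank=0):
    """Same quantity by enumerating every pattern (exponential cost)."""
    total = 0.0
    for path in product(range(len(probs[0])), repeat=len(probs)):
        if collapse(path, blank) == tuple(target):
            p = 1.0
            for t, label in enumerate(path):
                p *= probs[t][label]
            total += p
    return total

# Four frames, three labels (0 = blank); each row sums to 1.
probs = [[0.6, 0.3, 0.1], [0.2, 0.5, 0.3], [0.1, 0.2, 0.7], [0.5, 0.1, 0.4]]
dp = ctc_sequence_probability(probs, [1, 2])
brute = brute_force_probability(probs, [1, 2])
print(abs(dp - brute) < 1e-12)
```

The brute-force version visits every combination of labels across all frames, while the recursion only keeps one running total per position in the blank-interleaved target, which is what makes beam search with many candidate words practical.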

DeepSpeech2 dataset

DeepSpeech2 has been trained on AN4, LibriSpeech, and TED-LIUM.

AN4 is a small 16 kHz dataset created by CMU in 1991.

CMU Sphinx Group, Audio Databases: www.speech.cs.cmu.edu

LibriSpeech contains 1000 hours of 16 kHz speech taken from audiobooks.

TED-LIUM contains approximately 118 hours of 16 kHz speech taken from TED Talks.

DeepSpeech2 usage

To use DeepSpeech2 with the ailia SDK, use the following command.

$ python3 deepspeech2.py -i input.wav

To use the language model, add the -d option. You need to install the ctcdecode library and download the language model 3-gram.pruned.3e-7.arpa beforehand.

$ python3 deepspeech2.py -i input.wav -d

Example of DeepSpeech2 output

Use the following material.

Here is the result using the language model.

what somebody decides to break it be careful that you keep angular coverage but look for places to save money ninety is taking longer to get things squared away than the banker's expected during the life for once company may win her taxied retirement and count de bust telle but inadequate new self to seeming rags or hurriedly tolson the two naked bone to want o discussion cannons thou when the title of this type of than is in question or to dying or waxing or gassing tete debrett may be personalized known by a clays leather horn lace work on a flat surface and smooth out a simples tinto separate system uses a single self contained in it the old chap an ad still hold a good mechanic is usually a bad but so figures would do her in lady years we make beautiful chares canet chesnel's etcher'

And below is the result without using the language model.

wha i somebody decides to break it he careful that you keep anquhaod coverage but look for places to save monyniete its taking longer to get things squired away than the bankers expected liring the life for once comnpany my win her taxited retireent and comnt debouse ta telple but inadequate new self to seeming rags ore hurridly tos on the two naked bone to want o discussion cannins shou when the title of this type of thol is in questions ors o dying or waxing orgassingtete dibrualight may be persoaaised known by o clays leather horne lace work on a flat surface and smooth out a siples tiing to separate system useas a single sof contained un it the old chup an ad still hold a good mechanic is usually a bad bot fo figures would no her in lady years o make beautiful chaires camnets ches dol houses ed cheter

The result obtained using the language model is noticeably more natural: misspellings such as "comnpany" and "retireent" are corrected to "company" and "retirement".

ailia Inc. has developed ailia SDK, which enables cross-platform, GPU-based rapid inference.

ailia Inc. provides a wide range of services from consulting and model creation, to the development of AI-based applications and SDKs. Feel free to contact us for any inquiry.

ailia Tech BLOG
